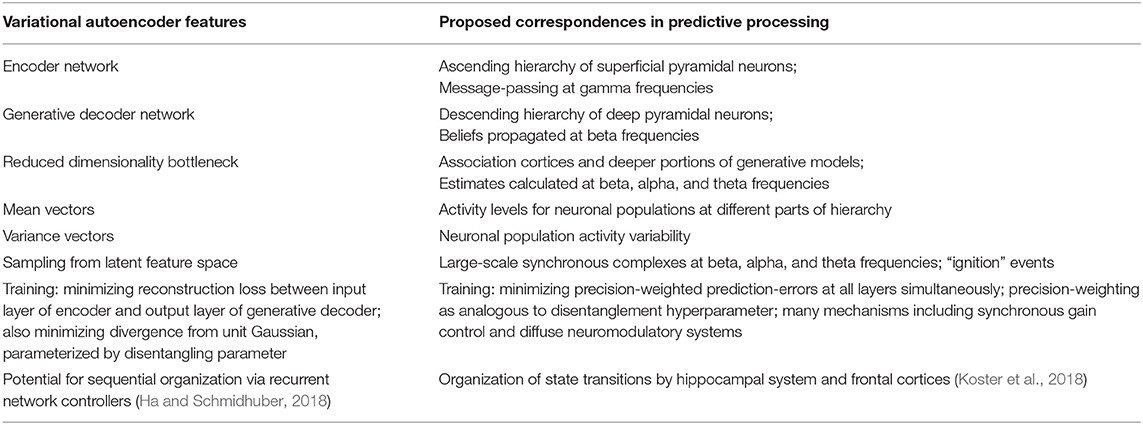

Friston, in Statistical Parametric Mapping, 2007

INTRODUCTION

Bayesian inference can be implemented for arbitrary probabilistic models using Markov chain Monte Carlo (MCMC) (Gelman et al., 1995). However, MCMC is computationally intensive and so is not practical for most brain imaging applications. This chapter describes an alternative framework called ‘variational Bayes’ (VB), which is computationally efficient and can be applied to a large class of probabilistic models (Winn and Bishop, 2005).

The VB approach, also known as ‘ensemble learning’, takes its name from Feynman's variational free energy method developed in statistical physics. VB is a development from the machine learning community (Peterson and Anderson, 1987; Hinton and van Camp, 1993) and has been applied in a variety of statistical and signal processing domains (Bishop et al., 1998; Jaakkola et al., 1998; Ghahramani and Beal, 2001; Winn and Bishop, 2005). It is now also widely used in the analysis of neuroimaging data (Penny et al., 2003; Sahani and Nagarajan, 2004; Sato et al., 2004; Woolrich, 2004; Penny et al., 2006; Friston et al., 2006).

We first review the salient properties of the Kullback-Leibler (KL) divergence. We then describe the fundamental relationship between model evidence, free energy and KL divergence that lies at the heart of VB. Finally, we describe how VB learning delivers a factorized, minimum KL-divergence approximation to the true posterior density, in which learning is driven by an explicit minimization of the free energy.
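The relationship between model evidence, free energy and KL divergence mentioned above is commonly written as `log p(y) = F(q) + KL(q || p(theta | y))`. What follows is a minimal numerical sketch of that identity, not the chapter's own derivation. It assumes a toy discrete model with three parameter values; the prior, likelihood and approximating density `q` are made-up illustration values, and the function names are hypothetical. It also assumes the sign convention in which `F` is a lower bound on the log evidence (the negative of the free energy), so that minimizing the free energy corresponds to maximizing `F`.

```python
import math

# Hypothetical toy model: a parameter theta with three possible values.
prior = [0.5, 0.3, 0.2]          # p(theta), made-up values
likelihood = [0.10, 0.40, 0.70]  # p(y | theta) for one fixed observation y, made-up values

# Joint p(y, theta), model evidence p(y), and exact posterior p(theta | y).
joint = [p * l for p, l in zip(prior, likelihood)]
evidence = sum(joint)
posterior = [j / evidence for j in joint]


def lower_bound(q):
    """F(q) = sum over theta of q(theta) * log(p(y, theta) / q(theta))."""
    return sum(qi * math.log(ji / qi) for qi, ji in zip(q, joint) if qi > 0)


def kl(q, p):
    """KL(q || p) = sum over theta of q(theta) * log(q(theta) / p(theta))."""
    return sum(qi * math.log(qi / pi) for qi, pi in zip(q, p) if qi > 0)


# An arbitrary approximating density over theta (made-up values).
q = [0.2, 0.3, 0.5]

log_evidence = math.log(evidence)
F = lower_bound(q)
gap = kl(q, posterior)

# The log evidence splits into the bound plus the (non-negative) KL gap.
print(f"log p(y) = {log_evidence:.6f}")
print(f"F(q) + KL = {F + gap:.6f}")
```

Because the KL term is never negative, `F` cannot exceed the log evidence, and the two coincide only when `q` equals the exact posterior. This is why improving `F` with respect to `q` also reduces the KL divergence between `q` and the true posterior.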
